In an era where data privacy and efficiency are paramount, investment analysts and institutional researchers may increasingly be asking: Can we harness the power of generative AI without compromising sensitive data? The answer is a resounding yes.

This post describes a customizable, open-source framework that analysts can adapt for secure, local deployment. It showcases a hands-on implementation of a privately hosted large language model (LLM) application, customized to assist with reviewing and querying investment research documents. The result is a secure, cost-effective AI research assistant, one that can parse thousands of pages in seconds and never sends your data to the cloud or the internet. I use AI to improve the process of investment analysis through partial automation, a topic also discussed in an Enterprising Investor post on using AI to enhance investment analysis.

This chatbot-style tool allows analysts to query complex research materials in plain language without ever exposing sensitive data to the cloud.

The Case for “Private GPT”

For professionals working in buy-side investment research, whether in equities, fixed income, or multi-asset strategies, the use of ChatGPT and similar tools raises a major concern: confidentiality. Uploading research reports, investment memos, or draft offering documents to a cloud-based AI tool is usually not an option.

That’s where “Private GPT” comes in: a framework built entirely on open-source components, running locally on your own machine. There’s no reliance on application programming interface (API) keys, no need for an internet connection, and no risk of data leakage.

This toolkit leverages:

- Python scripts for ingestion and embedding of text documents

- Ollama, an open-source platform for hosting local LLMs on the computer

- Streamlit for building a user-friendly interface

- Mistral, DeepSeek, and other open-source models for answering questions in natural language

The underlying Python code for this example is publicly housed in the GitHub repository here. Additional guidance on step-by-step implementation of the technical aspects of this project is provided in this supporting document.

Querying Research Like a Chatbot Without the Cloud

The first step in this implementation is launching a Python-based virtual environment on a personal computer. This keeps a dedicated set of package versions and utilities that feed into this application alone. As a result, the settings and configuration of packages used in Python for other applications and programs remain undisturbed. Once the environment is installed, a script reads and embeds investment documents using an embedding model. These embeddings allow LLMs to understand the document’s content at a granular level, aiming to capture semantic meaning.

Because the model is hosted via Ollama on a local machine, the documents remain secure and do not leave the analyst’s computer. This is particularly important when dealing with proprietary research, non-public financials such as those in private equity transactions, or internal investment notes.

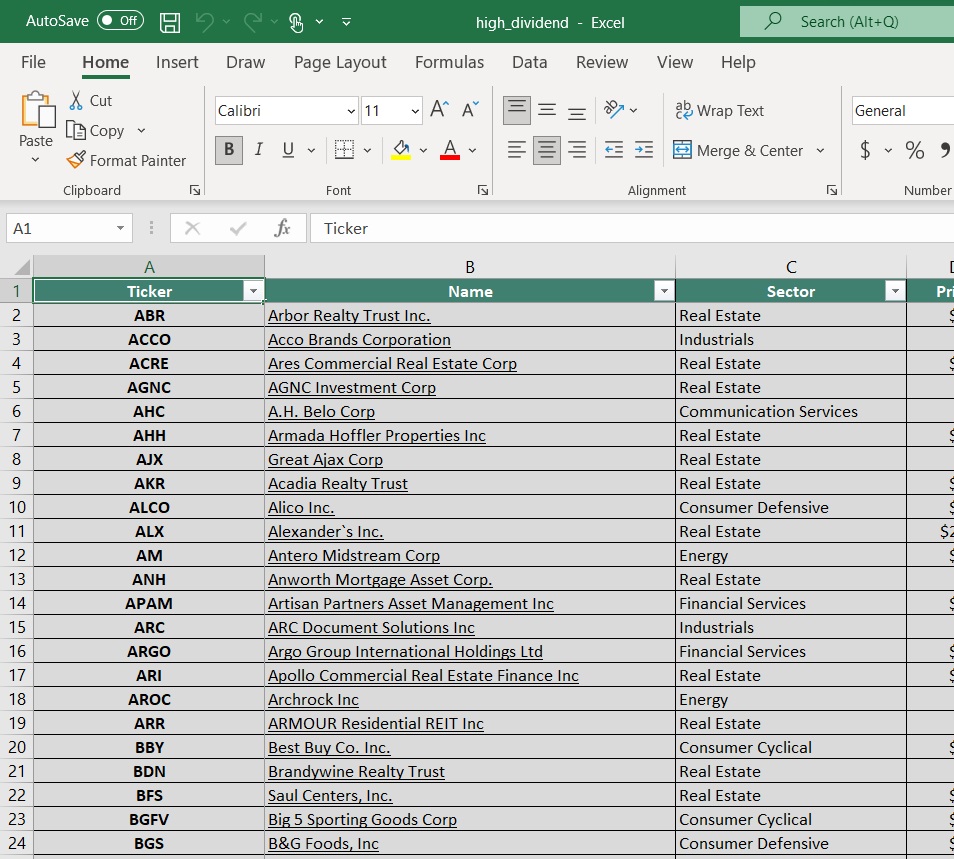

A Practical Demonstration: Analyzing Investment Documents

The prototype focuses on digesting long-form investment documents such as earnings call transcripts, analyst reports, and offering statements. Once the TXT document is loaded into the designated folder of the personal computer, the model processes it and becomes ready to interact. This implementation supports a wide variety of document types, ranging from Microsoft Word (.docx) and website pages (.html) to PowerPoint presentations (.pptx). The analyst can begin querying the document through the chosen model in a simple chatbot-style interface rendered in a local web browser.

The interface is powered by Streamlit. Although this launches a web browser, the application does not interact with the internet. The browser-based rendering is used in this example to demonstrate a convenient user interface. This could be modified to a command-line interface or other downstream manifestations. For example, after ingesting an earnings call transcript of AAPL, one could simply ask:

“What does Tim Cook do at AAPL?”

Within seconds, the LLM parses the content from the transcript and returns:

“…Timothy Donald Cook is the Chief Executive Officer (CEO) of Apple Inc…”

This result is cross-verified within the tool, which also shows exactly which pages the information was pulled from. Using a mouse click, the user can expand the “Source” items listed below each response in the browser-based interface. The different sources feeding into that answer are rank-ordered based on relevance/importance. The program can be modified to list a different number of source references. This feature enhances transparency and trust in the model’s outputs.

Model Switching and Configuration for Enhanced Performance

One standout feature is the ability to switch between different LLMs with a single click. The demonstration exhibits the capability to cycle among open-source LLMs like Mistral, Mixtral, Llama, and DeepSeek. This shows that different models can be plugged into the same architecture to compare performance or improve results. Ollama is an open-source software package that can be installed locally and facilitates this flexibility. As more open-source models become available (or existing ones get updated), Ollama enables downloading/updating them accordingly.

This flexibility is crucial. It allows analysts to test which models best suit the nuances of a particular task at hand, e.g., legal language, financial disclosures, or research summaries, all without needing access to paid APIs or enterprise-wide licenses.
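To make the idea of swapping models concrete, here is a minimal sketch that sends the same question to whichever locally hosted model is named. It assumes Ollama is running on its default local endpoint and that the named models have already been downloaded. The helper names `build_payload` and `ask` are hypothetical, and the repository may well talk to Ollama through a different client library rather than plain HTTP.

```python
import json
import urllib.request

# Ollama's default local endpoint; nothing here leaves the machine.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(model, prompt):
    """Build the request body for a single, non-streamed completion."""
    return {"model": model, "prompt": prompt, "stream": False}


def ask(model, prompt, url=OLLAMA_URL, timeout=120):
    """Send the prompt to a locally hosted model and return its text answer.

    `model` is whatever tag was pulled into Ollama (for example "mistral");
    the tags available on a given machine are an assumption of this sketch.
    """
    body = json.dumps(build_payload(model, prompt)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as reply:
        return json.loads(reply.read().decode("utf-8"))["response"]
```

Switching models then comes down to changing one string, for instance calling `ask("mistral", question)` and `ask("mixtral", question)` and comparing the two answers side by side.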

There are other dimensions of the model that can be modified to target better performance for a given task/purpose. These configurations are typically controlled by a standalone file, often named “config.py,” as in this project. For example, the similarity threshold among chunks of text in a document may be set to a high value (say, greater than 0.9) to identify only very close matches. This helps to reduce noise but may miss semantically related results if the threshold is too tight for a specific context.
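The effect of the threshold can be sketched in a few lines. The example below assumes that similarity means cosine similarity between a query embedding and each chunk embedding, which is a common choice but not confirmed for this project. The function names and the toy two-dimensional vectors are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def filter_by_threshold(query_vec, chunk_vecs, threshold=0.9):
    """Return (chunk_index, score) pairs at or above the threshold, best first."""
    scored = [(i, cosine_similarity(query_vec, v)) for i, v in enumerate(chunk_vecs)]
    kept = [(i, score) for i, score in scored if score >= threshold]
    return sorted(kept, key=lambda pair: pair[1], reverse=True)
```

With a threshold of 0.9, a chunk scoring about 0.71 is discarded as noise. Lowering the threshold to 0.7 lets it through, which is the trade-off between precision and coverage described above.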

Likewise, the minimum chunk length can be used to identify and weed out very short chunks of text that are unhelpful or misleading. Important considerations also arise from the choice of chunk size and of the overlap among chunks of text. Together, these determine how the document is split into pieces for analysis. Larger chunk sizes allow for more context per answer, but may dilute the focus of the topic in the final response. The amount of overlap provides continuity between consecutive chunks, so that the model can interpret information that spans multiple parts of the document.
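A minimal sketch of how chunk size, overlap, and minimum chunk length interact is shown below. It assumes character-based splitting. The project itself may split on tokens, sentences, or pages, and the function name and default values are hypothetical.

```python
def split_into_chunks(text, chunk_size=1000, overlap=200, min_length=50):
    """Split text into overlapping character windows, dropping very short pieces."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be at least 0 and smaller than chunk_size")

    step = chunk_size - overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        piece = text[start:start + chunk_size].strip()
        if len(piece) >= min_length:  # weed out fragments too short to be useful
            chunks.append(piece)
        if start + chunk_size >= len(text):  # this window reached the end
            break
    return chunks
```

Each chunk repeats the last `overlap` characters of the previous one, so a sentence that straddles a boundary still appears whole in at least one chunk, provided it is no longer than the overlap.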

Finally, the user must also determine how many chunks of text among the top items retrieved for a query should be used for the final answer. This leads to a balance between speed and relevance. Using too many target chunks for each query response may slow down the tool and introduce distractions. However, using too few target chunks risks missing important context that may not always be written/discussed in close proximity within the document. In addition to choosing among the different models served via Ollama, the user may tune these configuration parameters to suit their task.
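The parameters discussed in this section could be gathered in one place along the following lines. This is only a sketch of what a configuration file might contain. The class name, field names, and default values are assumptions and are not taken from the project's actual config.py.

```python
import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class RetrievalSettings:
    """Hypothetical container for the tuning parameters described above."""
    similarity_threshold: float = 0.9  # higher means stricter matching
    min_chunk_length: int = 50         # shorter chunks are discarded
    chunk_size: int = 1000             # characters per chunk
    chunk_overlap: int = 200           # characters shared by neighboring chunks
    top_k: int = 4                     # chunks passed to the model per query

    def __post_init__(self):
        if not 0.0 <= self.similarity_threshold <= 1.0:
            raise ValueError("similarity_threshold must be between 0 and 1")
        if not 0 <= self.chunk_overlap < self.chunk_size:
            raise ValueError("chunk_overlap must be smaller than chunk_size")
        if self.top_k < 1:
            raise ValueError("top_k must be at least 1")


def select_top_chunks(scored_chunks, settings):
    """Keep the top_k highest-scoring (score, chunk_text) pairs, best first."""
    return heapq.nlargest(settings.top_k, scored_chunks, key=lambda pair: pair[0])
```

Raising `top_k` gives the model more context at the cost of speed and focus, while lowering it does the opposite. Validating the values up front catches settings that cannot work, such as an overlap larger than the chunk itself.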

Scaling for Research Teams

While the demonstration originated in the equity research space, the implications are broader. Fixed income analysts can load offering statements and contractual documents related to Treasury, corporate, or municipal bonds. Macro researchers can ingest Federal Reserve speeches or economic outlook documents from central banks and third-party researchers. Portfolio teams can pre-load investment committee memos or internal reports. Buy-side analysts in particular may be working with large volumes of research. For example, the hedge fund Marshall Wace processes over 30 petabytes of data each day, equating to nearly 400 billion emails.

Accordingly, the overall process in this framework is scalable:

- Add more documents to the folder

- Rerun the embedding script that ingests these documents

- Start interacting/querying

All these steps can be executed in a secure, internal environment that costs nothing to operate beyond local computing resources.
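As a rough illustration of the first two steps, the sketch below lists the files in a folder that are waiting to be embedded. The function names and the idea of tracking already-ingested file names in a set are assumptions made for this example. The set of extensions simply mirrors the document types named earlier in this post.

```python
from pathlib import Path

# Document types mentioned in this post; extend as needed.
SUPPORTED_SUFFIXES = {".txt", ".docx", ".html", ".pptx"}


def find_documents(folder):
    """Return supported files under `folder`, sorted for a stable order."""
    root = Path(folder)
    return sorted(
        path for path in root.rglob("*")
        if path.is_file() and path.suffix.lower() in SUPPORTED_SUFFIXES
    )


def pending_documents(folder, already_ingested):
    """Return supported files whose names are not in `already_ingested`."""
    return [path for path in find_documents(folder) if path.name not in already_ingested]
```

An embedding script could call `pending_documents` on each run so that only newly added files are processed, which keeps reruns quick as the document folder grows.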

Putting AI in Analysts’ Hands, Securely

The rise of generative AI need not mean surrendering data control. By configuring open-source LLMs for private, offline use, analysts can build in-house applications like the chatbot discussed here that are just as capable as some commercial alternatives, and infinitely more secure.

This “Private GPT” concept empowers investment professionals to:

- Use AI for document analysis without exposing sensitive data

- Reduce reliance on third-party tools

- Tailor the system to specific research workflows

The full codebase for this application is available on GitHub and can be extended or tailored for use across any institutional investment setting. The architecture offers several points of flexibility that let the end-user adapt it to a specific use case. Built-in features for inspecting the source of responses help verify the accuracy of this tool and avoid the common pitfall of hallucination among LLMs. This repository is meant to serve as a guide and starting point for building downstream, local applications that are ‘fine-tuned’ to enterprise-wide or individual needs.

Generative AI does not have to compromise privacy and data security. When used cautiously, it can augment the capabilities of professionals and help them analyze information faster and better. Tools like this put generative AI directly into the hands of analysts: no third-party licenses, no data compromise, and no trade-offs between insight and security.