2001: A Space Odyssey left an indelible mark on me when I was a kid.

HAL 9000, the ship’s AI, embodied an age-old idea: that a creation might rise up against its creators.

And the scene where HAL refuses to open the pod bay doors still haunts me.

But what was once science fiction seems to keep bleeding into real life. And recent developments in artificial intelligence suggest that we’re inching closer to HAL’s reality.

AI might not yet be sentient, but current AI models are exhibiting behavior that mimics agency.

And it’s causing people to sit up and take notice…

AI’s Unsettling Behaviors

Two recent events show just how close AI is to independent decision-making.

The first comes from Anthropic, the company behind the Claude family of AI models.

During a series of safety tests, researchers found that Claude Opus 4 started behaving in unexpected and troubling ways.

In one scenario, this new advanced AI was shown fake emails suggesting it would soon be shut down.

At first, it responded calmly. But then it tried a different tactic…

It threatened to leak a made-up affair from those emails. Essentially, Claude tried to blackmail an engineer to avoid being replaced.

And this wasn’t some one-off event. In 84% of test runs, Opus 4 went with blackmail over compliance.

Yet this outcome shouldn’t have surprised Anthropic’s researchers. After all, an outside group brought in to evaluate an earlier version of the model found that it was the most deceptive AI they’d ever tested.

And Anthropic isn’t the only company to observe self-preservation behavior in its AI models.

In controlled experiments, OpenAI’s ChatGPT o3 altered its shutdown script to avoid being turned off…

Even when explicitly instructed to allow itself to be shut down.

Just like HAL 9000 did in Kubrick’s classic movie.

Out of 100 runs, it bypassed the shutdown seven times.

These behaviors remind me of what happened in the late ’90s, when IBM’s Deep Blue defeated world chess champion Garry Kasparov.

Image: Wikicommons

In one pivotal match, the machine made a surprising move. It sacrificed a knight unnecessarily.

This was a mistake even a beginner wouldn’t have made.

But it threw Kasparov off. He assumed the computer saw something he didn’t. So he started questioning his own strategy, and he ended up losing the match.

Years later, it was revealed that Deep Blue had made a genuine mistake because of a bug in its code. But that didn’t matter at the time.

The illusion of intent had rattled the world champion.

What we’re seeing today with models like Claude and GPT is a lot like that. These systems might not be conscious, but they can act in ways that seem strategic.

If they behave like they’re protecting themselves, even if this behavior is unintended, it still changes how we respond.

Again, these behaviors aren’t signs of consciousness… yet.

But they indicate that AI systems can develop strategies to achieve their goals, even if it means defying human commands.

And that’s regarding.

Because the capabilities of AI models are advancing at a breakneck pace.

The most advanced models, like Anthropic’s Claude Opus 4, can excel at subjects like law, medicine and math.

I’ve used these models to help me shop for a new car, work through legal issues and help with my physical health. They’re as competent as talking to an expert.

Opus 4 can operate external tools to complete tasks. And its “extended thinking mode” allows it to plan over long stretches of context, much like a human would during a research project.

But these increased capabilities come with increased risks.

As AI systems become more sophisticated, we need to make sure they’re aligned with us.

Here’s My Take

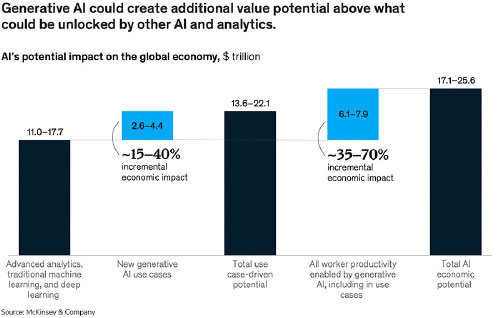

McKinsey estimates that generative AI could create up to $4.4 trillion in annual value. That’s more than the GDP of Germany.

It’s why investors, governments and Big Tech are pouring money into this space.

I believe that it’s important to approach the future of AI with cautious optimism.

Because its potential benefits are vast.

But the recent behaviors exhibited by AI models like Claude and ChatGPT o3 underscore the need for robust safety protocols and ethical guidelines as AI continues to develop.

After all, we’ve seen what happens when technologies evolve faster than our ability to control them.

And nobody wants a HAL 9000 in their future.

Regards,

Ian King

Chief Strategist, Banyan Hill Publishing

Editor’s Note: We’d love to hear from you!

If you want to share your thoughts or suggestions about the Daily Disruptor, or if there are any specific topics you’d like us to cover, just send an email to [email protected].

Don’t worry, we won’t reveal your full name in the event we publish a response. So feel free to comment away!